Loving Grace, Wrong Race

India has the right AI argument. Now let's build a mission that rises to its promise.

AI is now in everything, everywhere, all at once. And it is remaking our world with an impatience matched only by our indifference to the digital divides we never closed. In October 2024, Dario Amodei, CEO of Anthropic, wrote “Machines of Loving Grace”, perhaps the most serious attempt by an AI company leader to imagine what a world transformed by AI could actually look like. He describes a world where AI compresses decades of biological progress into years, and where the developing world grows at rates that could bring Sub-Saharan Africa to China's current per capita GDP within a decade. “Most people are underestimating just how radical the upside of AI could be”, he writes. But intelligence, he warns, “isn't magic fairy dust”. AI ultimately lives in this world, bound by the slowness of physical reality and by the constraints that we humans impose. The latter, I'd argue, include our governance structures, political economy, and the technological inequalities we have decided to live with. That honesty matters, because however powerful AI becomes, it cannot simply cut through the mess of the world it lands in.

Being the Class Topper Is Not Enough

Most people watching the AI race believe it is all about frontier labs: the Anthropics, the OpenAIs and the DeepSeeks of the world. That the first country to create what former US National Security Advisor Jake Sullivan called “God-like” Artificial General Intelligence will “win.” Jeffrey Ding, author of Technology and the Rise of Great Powers, thinks otherwise. He turns to past technological revolutions. The Soviet Union launched Sputnik, graduated twice as many scientists and engineers as the US, and spent a larger share of its GDP on R&D. It still collapsed. Conversely, the US was no class topper in the Second Industrial Revolution; Germany led in chemistry, Britain in basic research. Yet the US emerged as the clear superpower. It built a broad base of engineers, institutionalised chemical engineering as a discipline at MIT before its European peers, and created dense linkages between industry and academia that transferred knowledge from frontier labs to factories.

What matters, Ding argues, is not whether you create the best technology but how well you can diffuse it throughout the entire economy, raising productivity across the board. AI is a general-purpose technology (GPT), one that spreads throughout an economy rather than staying confined to any one industry. For GPTs, Ding argues, diffusion matters more than technological innovation in determining overall national power. It matters less whether you can build your own Silicon Valleys than whether your Bengalurus ever reach your Byrnihats.

The Pragmatist Indian

India understands this, by choice or compulsion. Probably more compulsion than choice. When you cannot afford to compete with the frontier labs, you develop a sophisticated argument for why the frontier is the wrong destination anyway. As Srikanth Velamakanni, co-founder of Fractal Analytics, put it, “India’s Rs 10,000 crore AI mission is roughly what OpenAI spends in a weekend.” But the argument, it turns out, is largely correct.

Speaking at Davos, Ashwini Vaishnaw, India’s Electronics Minister, argued that nearly 95% of all AI use cases can be addressed with models of 20-50 billion parameters, and that ROI comes not from model size but from deployment. At the AI Impact Summit, he elaborated on this point:

“Today models have already become commoditized. There are good models which are just maybe four or five months or maybe six months behind the topmost frontier models. They are now available in open-source.”

Nandan Nilekani, whom many have taken to calling India's de facto CTO, is fully on board with this argument. Nilekani talks of diffusion pathways: replicable playbooks built from lived deployment experience. Lessons from Maharashtra’s agricultural AI initiative, MahaVistar, were adapted for use in Ethiopia in three months. A parallel playbook reached dairy farmers through Amul in three weeks. Infosys has announced a partnership with Google, the Gates Foundation, and UNDP to develop 100 such pathways by 2030. “All of us who have a stake in AI being valuable to humanity have to accelerate diffusion,” he warned. “Or the consequences will be bad.”

Nilekani is also betting on “spoken AI in dialect of your choice”. Keyboard literacy was the bottleneck for PC-era services. Touch screens lowered that barrier for smartphones. Voice in vernacular languages would remove it almost entirely, making AI accessible to populations that the loving grace of technology has never reached.

Perhaps. However, in government, the hand that writes the cheque rarely reads the keynote address. Ding argues that diffusion strategies are structurally hard to sustain. The benefits of diffusion — broader engineering talent, stronger industry-academia linkages, vernacular voice interfaces for farmers — accrue to dispersed interests. No single industry lobbies for them. They do not give you the reel material that a frontier model beating Claude at key benchmarks would. The result is a constant underinvestment in what actually matters for diffusion to work.

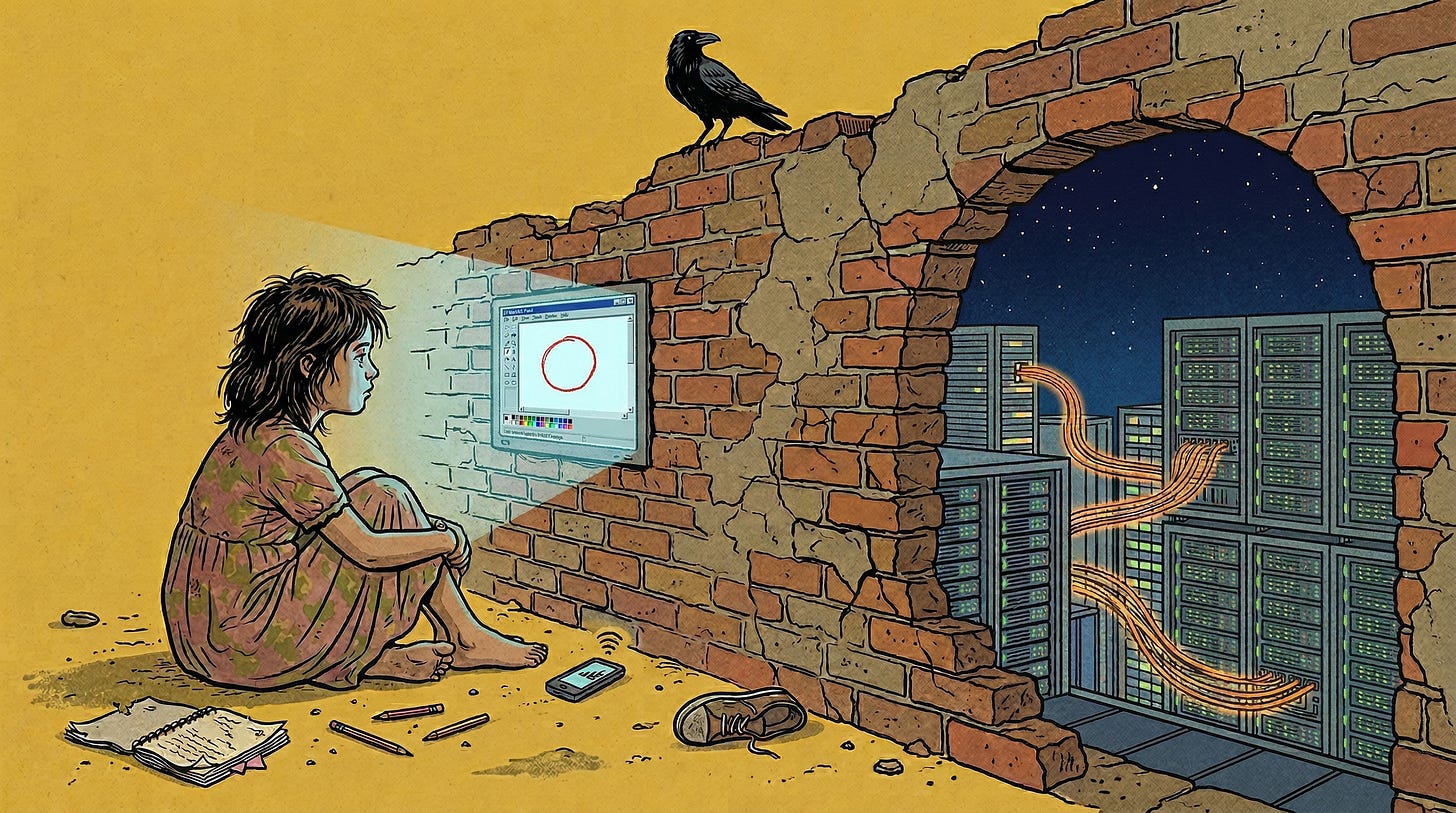

Everyone Else Gets a Hole-in-the-Wall

The good and the bad news is that none of this is new. Most of what AI needs to be useful (functional literacy, affordable devices, reliable internet, practical digital skills) was already pending work. Had these prerequisites existed, they would have lifted productivity and welfare on their own, without any AI in the picture. But AI promises a much greater return on this long-deferred investment. Conversely, it threatens a much bigger chasm between the haves and have-nots if the to-do list of prerequisites remains unticked.

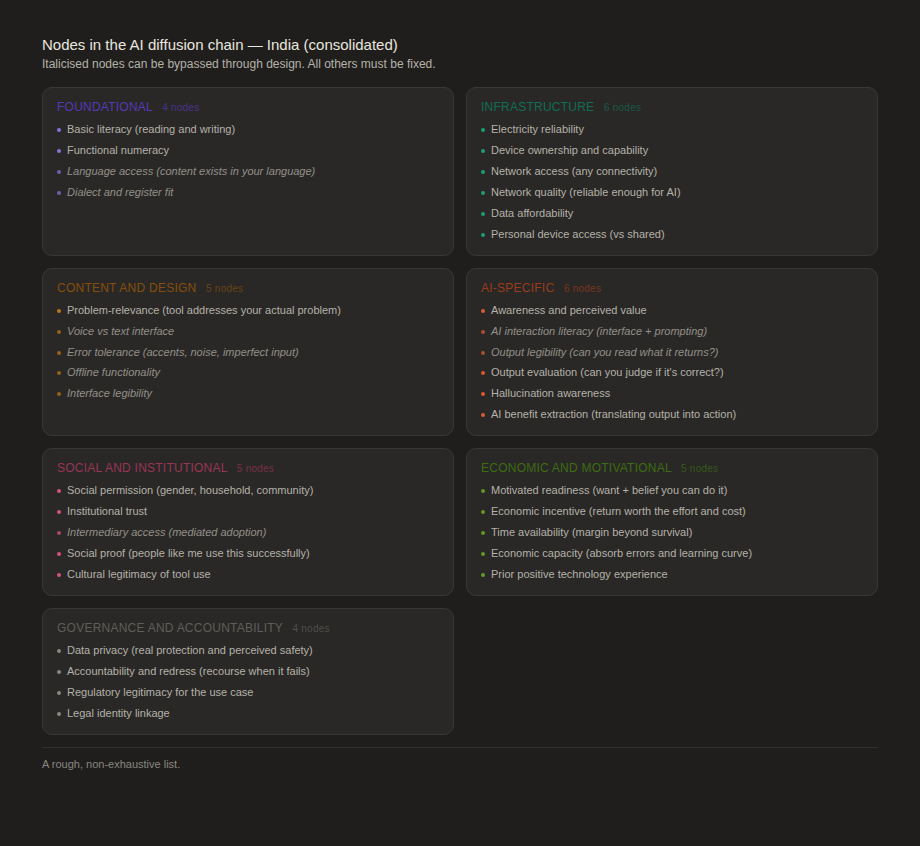

I like to think of the diffusion journey as a series of chains, each link a prerequisite for the next. The breaks in those chains exist at every level: between states, between districts within those states, between households within those districts, and between individuals within those households. Cutting across all of this, the gaps by caste and gender can be just as wide. Some places have achieved basic literacy and numeracy for all. Others haven't. Some people have a smartphone. Others don’t. Within households, the phone often belongs to one person, usually the adult male member, which means everyone else’s access is borrowed at best. Among those with a device, some have reliable internet. Others are on a connection that works only in that one corner of the house, or one that is not stable or fast enough to be useful. Then comes awareness of AI and what it can actually do. Then usability, which, for the vast majority who aren't comfortable in English, improves dramatically if the interface is in their own language, in voice, not text. And finally, the output must actually be useful, not marginally better but transformatively better, which AI can deliver only if the models have been trained on the specific realities of Indian lives, Indian languages and Indian problems.

Jan van Dijk, a Dutch sociologist, mapped a similar logic in his work on the digital divide. He identifies four sequential barriers to technology access: motivational, material, skills, and usage. The key insight is that you cannot skip levels. A person without motivational access will not seek material access. A person with a device but no skills will not achieve meaningful usage. Each layer is a prerequisite for the next. Right now, several of those links are broken, and not the same links for everyone. Even if the frontier labs manage to 1000x the power of their AI models, it changes very little at the last mile.

Mark Warschauer, in Technology and Social Inclusion, opens with a case study close to home. This is the story of the Hole-in-the-Wall experiments conducted by Sugata Mitra, whom some people call a visionary and some call a charlatan. Mitra’s team installed computer kiosks in one of New Delhi’s poorest neighbourhoods. The idea was that children would teach themselves. But the internet rarely functioned, no content was available in Hindi, the only language the children knew, and they spent almost all their time on paint programs and games. Parents complained their children’s schoolwork was suffering. Warschauer’s verdict was blunt: minimally invasive education was, in practice, minimally effective education. The failure wasn’t the technology. It was the absence of everything else: content in the right language, human instruction, community involvement.

His second case study travels to Ennis, Ireland, which won a national competition to become an “Information Age Town” and received fifteen times more funding than the runners-up. Every family got a computer and an internet connection. The result was disastrous. The unemployed were told to sign in for welfare payments online and couldn’t figure out how the equipment worked. They returned to the office anyway. Some sold the computers on the black market. The three runner-up towns, with one-fifteenth of the money, actually had more to show for it, because they had spent on awareness, training, and existing community networks rather than on hardware.

Warschauer’s conclusion is that meaningful access requires four categories of resources working together — physical, digital, human, and social. Not necessarily in sequence, but in combination. Each reinforces or undermines the others. Invest heavily in one while ignoring the rest and you don’t just fail to help, you concentrate the gains among those who already had everything else in place: urban, educated, English-speaking. Everyone else gets a Hole-in-the-Wall.

The Sprint and the Marathon

India does need an AI Mission. Building some domestic capacity still matters, especially when training models tailored to India’s immense diversity does not always make great business sense. But the mission does not take the minister’s own argument seriously. The revised expenditure on the IndiaAI Mission for 2025-26 came in at Rs 800 crore, less than half the budgeted Rs 2,000 crore. Of what was spent, over 85% went to compute subsidies for model building: Sarvam, BharatGen, Soket AI, and others. Skilling, datasets and application development, the work that would actually mend the broken chains, received almost nothing. Nor can the mission mend the chains alone. Connectivity, literacy, electricity reliability and gender-equitable device access are long-standing non-AI problems. They are governance problems that require funding and implementation across every tier of government.

Amodei ends his essay describing the world with AI’s loving grace as “a thing of transcendent beauty” and adds, “We have the opportunity to play some small role in making it real.” But the loving grace of AI will not arrive by itself. It will arrive, if it arrives, through the unglamorous work of fixing the broken chains, one node at a time. From Bengaluru, all the way to Byrnihat.

*The usual disclaimer: views here are entirely my own.
